GPT-5.5 Is Here: What OpenAI's New Model Actually Changes for Coding, Research, and Computer Use

OpenAI launched GPT-5.5 on April 23, 2026. Here's what changed, why developers and power users should care, and where the new model looks genuinely more useful than GPT-5.4.

OpenAI introduced GPT-5.5 on April 23, 2026, and an April 24 update confirmed API availability for GPT-5.5 and GPT-5.5 Pro. That alone makes it a major launch-week story, but the more interesting part is the product direction behind it. OpenAI is not presenting GPT-5.5 as a prettier chatbot. It is pitching the model as a stronger worker across tools: code, browser research, spreadsheets, documents, and long-running multi-step tasks.

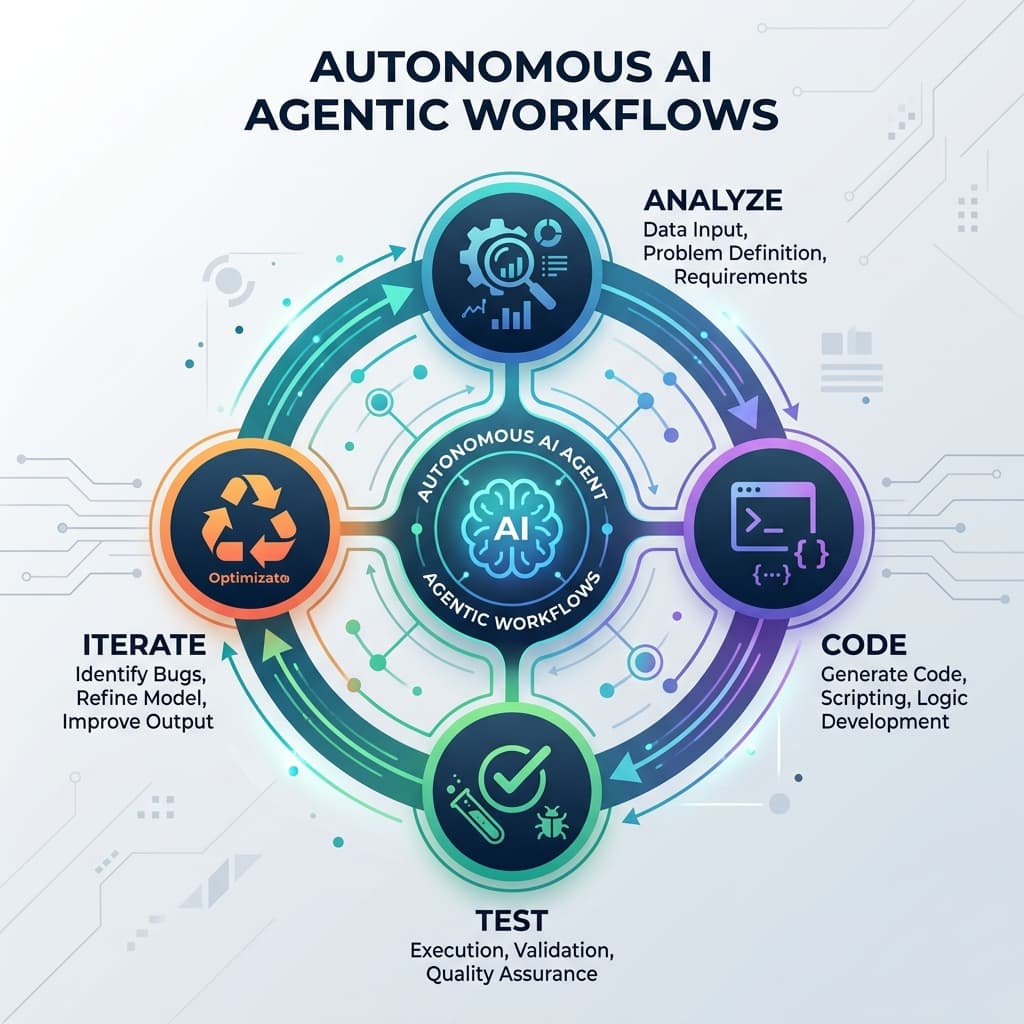

That matters because the AI market is no longer moving on benchmark wins alone. The real question in 2026 is whether a model can stay coherent while doing actual work. Can it debug code without losing the thread? Can it analyze information, move between tools, and finish a messy task without constant babysitting? OpenAI's GPT-5.5 announcement suggests that is the capability frontier the company now cares most about.

The Big Shift: GPT-5.5 Is About Completion, Not Just Conversation

The most important signal in the GPT-5.5 launch is how OpenAI describes the model's job. The company says GPT-5.5 is better at planning, using tools, checking its own work, navigating ambiguity, and carrying work forward until a task is finished. That sounds subtle, but it is a meaningful shift from the old pattern where an AI system gives a strong answer and then waits for the next instruction.

In practice, this is the difference between:

- getting a code suggestion

- getting a model that can inspect the codebase, make a change, run tests, notice the failure, adjust the patch, and keep going
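The second pattern can be sketched as a small retry loop. This is a minimal illustration, not OpenAI's implementation: `propose_patch` and `apply_and_test` are hypothetical callables standing in for a model call and a test runner, and the stub functions below exist only to make the example runnable.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CheckResult:
    passed: bool
    log: str


def run_until_green(
    propose_patch: Callable[[str], str],
    apply_and_test: Callable[[str], CheckResult],
    task: str,
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first patch whose tests pass, or None if attempts run out."""
    feedback = task
    for attempt in range(1, max_attempts + 1):
        patch = propose_patch(feedback)
        result = apply_and_test(patch)
        if result.passed:
            return patch
        # Feed the failure log back so the next proposal can adjust.
        feedback = f"{task}\n\nAttempt {attempt} failed:\n{result.log}"
    return None


# Hypothetical stand-ins for a real model and a real test suite.
def fake_model(prompt: str) -> str:
    return "patch-v2" if "failed" in prompt else "patch-v1"


def fake_test_runner(patch: str) -> CheckResult:
    ok = patch == "patch-v2"
    return CheckResult(passed=ok, log="" if ok else "test_parse: AssertionError")


final_patch = run_until_green(fake_model, fake_test_runner, "Fix the parser bug")
print(final_patch)
```

The point of the sketch is the feedback step: a one-shot suggestion stops after the first proposal, while the loop keeps the failure in context and tries again within a bounded number of attempts.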

That same pattern extends beyond software engineering. OpenAI is explicitly positioning GPT-5.5 for document-heavy tasks, spreadsheet work, operational analysis, and research workflows that unfold over multiple steps instead of a single prompt-response exchange.

Where GPT-5.5 Looks Strongest Right Now

Coding and technical workflows

OpenAI says GPT-5.5 is its strongest agentic coding model so far. In the launch post, the company highlights gains on Terminal-Bench 2.0, SWE-Bench Pro, and internal long-horizon engineering evaluations. More importantly, the examples in the announcement are not toy demos. They focus on refactors, debugging, merge resolution, test-aware implementation, and broader system reasoning.

For working developers, that is the practical takeaway: GPT-5.5 appears designed to reduce the number of times you need to re-explain context or rescue the model after it drifts. If that holds up in daily use, the upgrade is less about flashier generations and more about fewer broken handoffs.

Knowledge work and analysis

OpenAI is also pushing GPT-5.5 as a model for general computer-based work. The company describes it as stronger at turning messy inputs into useful outputs, including documents, spreadsheets, and operational analysis. That may sound broad, but it lines up with what many teams actually want from AI right now: fewer "here's a draft" moments, more "here's the finished first pass."

That is especially relevant for people working in product, operations, marketing, finance, consulting, and technical program roles. If the model can reliably synthesize raw information, structure it, and push it into usable business artifacts, that is a much bigger productivity unlock than incremental chat quality alone.

Research and scientific work

Another notable part of the launch is how aggressively OpenAI is framing GPT-5.5 as a research assistant for serious technical work. The announcement highlights gains on GeneBench and BixBench, plus examples involving mathematical and biological workflows. That does not mean researchers should blindly trust outputs, but it does suggest the company believes GPT-5.5 is crossing into more demanding analysis loops where interpretation, iteration, and evidence gathering matter.

For technical users, this is a sign to test the model on workflows that were previously too fragile for general-purpose assistants: literature review synthesis, code-backed analysis, dataset interpretation, and complex notebook planning.

Why This Launch Matters More Than Another Benchmark Round

Plenty of AI launches promise better intelligence. GPT-5.5 matters because OpenAI is trying to combine three things that do not always arrive together:

- stronger reasoning

- stronger tool use

- similar real-world latency to the previous generation

OpenAI says GPT-5.5 matches GPT-5.4's per-token latency in real-world serving while also using fewer tokens on Codex tasks. If true, that is a serious product advantage. Teams do not only want a smarter model. They want a smarter model that does not feel slower, more expensive, or harder to operationalize.
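To see why equal per-token latency plus fewer tokens matters, a rough back-of-the-envelope calculation helps. Every number below is a hypothetical placeholder, not a figure from OpenAI; the only assumption taken from the claim is that both models share the same per-token latency.

```python
def generation_seconds(output_tokens: int, seconds_per_token: float) -> float:
    """Approximate generation time as tokens multiplied by per-token latency."""
    return output_tokens * seconds_per_token


def relative_saving(baseline: float, candidate: float) -> float:
    """Fraction of the baseline saved by the candidate."""
    return (baseline - candidate) / baseline


# Hypothetical values chosen only for illustration.
SECONDS_PER_TOKEN = 0.02   # assumed identical for both models
baseline_tokens = 10_000   # tokens the older model spends on a task
candidate_tokens = 8_000   # tokens the newer model spends on the same task

baseline_time = generation_seconds(baseline_tokens, SECONDS_PER_TOKEN)
candidate_time = generation_seconds(candidate_tokens, SECONDS_PER_TOKEN)
saving = relative_saving(baseline_time, candidate_time)

print(f"{baseline_time:.0f}s vs {candidate_time:.0f}s, saving {saving:.0%}")
```

Under these assumptions, any reduction in tokens translates proportionally into less wall-clock time per task, and into lower cost wherever pricing is per token.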

The April 24, 2026 API update also matters for adoption speed. A launch can generate headlines, but the real test starts once developers can wire the model into production systems, compare costs, and see whether the autonomy gains survive outside carefully selected demos.

What Developers and Power Users Should Test First

If you have access to GPT-5.5 this week, the best evaluation is not a generic prompt shootout. Give it work that usually exposes model weakness:

1. Ambiguous bug fixing

Ask GPT-5.5 to investigate a bug with incomplete context, then see whether it identifies likely causes, proposes a verification path, and updates its approach after new evidence appears.

2. Multi-file implementation

Use a feature request that touches multiple modules, tests, or configuration layers. Stronger agentic behavior should show up in how well the model keeps the whole system in view.

3. Messy research synthesis

Give it notes, links, partial conclusions, and supporting documents. Then evaluate whether it can turn scattered material into a clean internal memo, plan, or recommendation without flattening important nuance.

4. Spreadsheet and document generation

OpenAI is clearly signaling that these are priority use cases. This is worth testing directly, especially if your workflow involves weekly reporting, analysis packs, or cross-functional summaries.
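One way to keep such a comparison honest is to write the four tests down as a small harness and run every model through the same checks. The sketch below is an assumption-laden illustration: `EvalTask`, `score_model`, the keyword-based checks, and `stub_model` are all hypothetical, and a real evaluation would swap in actual model calls and much stricter checks.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class EvalTask:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


def score_model(model: Callable[[str], str], tasks: List[EvalTask]) -> Dict[str, bool]:
    """Run every task through the model and record pass/fail per task."""
    return {task.name: task.check(model(task.prompt)) for task in tasks}


def pass_rate(results: Dict[str, bool]) -> float:
    return sum(results.values()) / len(results) if results else 0.0


# Deliberately crude checks, one per test category described above.
tasks = [
    EvalTask("ambiguous_bug_fix", "Investigate this bug: intermittent 500s on /login.",
             lambda out: "verify" in out.lower()),
    EvalTask("multi_file_implementation", "Implement this feature across the API and config layers.",
             lambda out: "tests" in out.lower()),
    EvalTask("research_synthesis", "Turn these notes into a memo with a recommendation.",
             lambda out: "recommendation" in out.lower()),
    EvalTask("spreadsheet_report", "Produce the weekly report as CSV.",
             lambda out: out.strip().startswith("week,")),
]


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call, returning canned answers."""
    canned = {
        "bug": "Likely cause: stale cache. Verify by replaying the failing request.",
        "feature": "Updated api.py and config.yaml; tests added in test_api.py.",
        "notes": "Summary of findings with open questions listed.",
        "report": "week,signups,churn\n1,120,4",
    }
    for keyword, answer in canned.items():
        if keyword in prompt.lower():
            return answer
    return ""


results = score_model(stub_model, tasks)
print(results, pass_rate(results))
```

Running two models against the same `tasks` list gives a like-for-like pass rate on your own workload, which is usually more informative than a generic prompt shootout.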

The Real Tradeoff to Watch

The biggest open question is not whether GPT-5.5 is better than GPT-5.4. OpenAI's reported results suggest it is in many workflows. The real question is how much trust users can place in a model that now does more work autonomously.

When models become more persistent and more capable across tools, reliability matters more, not less. A system that can act longer without supervision can save hours, but it can also take a wrong path with more confidence. OpenAI's emphasis on stronger safeguards and additional cybersecurity classifiers reflects that tension directly.

So the practical stance is straightforward: use GPT-5.5 as an accelerator, not a substitute for judgment. The model looks especially promising for first-pass execution, systems reasoning, and tool-driven workflows, but important work still needs verification, especially in production engineering, research, and security-sensitive contexts.

Bottom Line

GPT-5.5 looks like a meaningful release because it pushes AI closer to dependable task completion rather than just better one-turn answers. For developers, analysts, and power users, that is the upgrade that actually changes daily work.

If GPT-5.4 felt capable but still a little brittle on long-running tasks, GPT-5.5 is worth testing immediately. The headline is not just that OpenAI made a smarter model. It is that the company is trying to make a model that can carry more of the work itself, across code, documents, research, and real computer use.